AI Chips Technology Trends & Landscape (GPU + TPU + FPGA + Startups)

Major tech companies are investing billions in AI chip development. Even Microsoft and Facebook are on board, using Intel FPGAs to accelerate their hardware infrastructure. A handful of startups are already unicorns, but there are also sad stories like Wave Computing, which filed for bankruptcy after raising $187 million in 3 years. In this series, we will cover about 30 companies. We will focus on the technology landscape with an emphasis on identifying future advancements and trends.

This series will be split into 3 parts. The first article looks at the development trends for GPU, TPU, FPGA, and startups. The first three categories represent the largest market share in AI acceleration. We will focus on what vendors have been improving. Hopefully, this tells us where they may go next and where the technology bottlenecks are. In the second half of this article, we look at novel approaches popular among startups. In particular, many of them move away from instruction-flow designs to dataflow designs. This is a major paradigm shift that could change the direction of AI chips completely, so let’s spend some time studying it.

Nvidia GPU

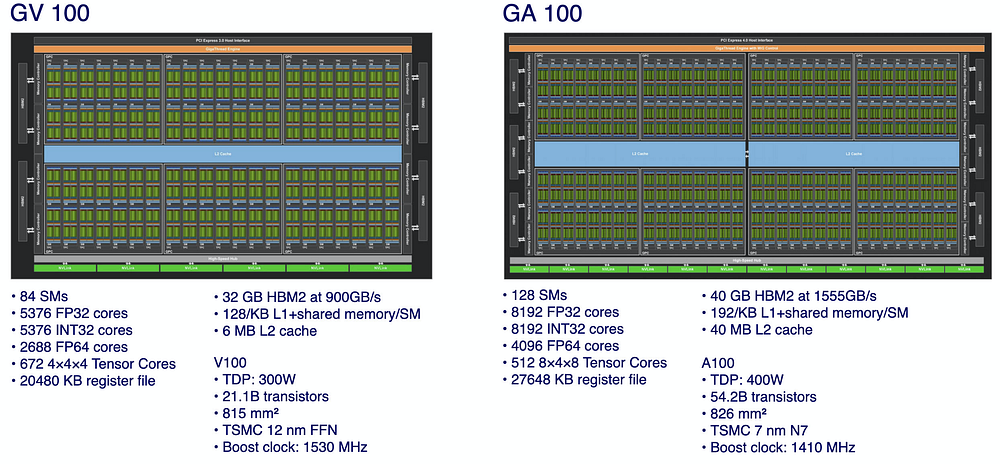

GV100 (Volta architecture) was released in late 2017, while GA100 (Ampere architecture) was released in 2020.

The GA100 GPU uses the TSMC 7 nm process instead of the 12 nm process used for GV100. While their die sizes are about the same, the number of Streaming Multiprocessors (SMs) in GA100 is increased by about 50% to 128, and the number of FP32 cores is increased from 5376 to 8192. The new Gen3 Tensor Cores perform 8×4 by 4×8 matrix multiplication instead of 4×4 by 4×4. (Details)
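As a quick sanity check on these figures, the sketch below counts the multiply-accumulate (MAC) operations in one Tensor Core tile operation for both shapes, and the ratio of FP32 cores. The helper name `macs` is ours, and treating throughput as proportional to the MAC count of one tile operation is a simplifying assumption.

```python
def macs(m: int, k: int, n: int) -> int:
    """MACs needed to multiply an (m x k) matrix by a (k x n) matrix."""
    return m * k * n

volta_tile = macs(4, 4, 4)    # 4x4 by 4x4
ampere_tile = macs(8, 4, 8)   # 8x4 by 4x8

print(volta_tile, ampere_tile, ampere_tile // volta_tile)
print(round(8192 / 5376, 2))  # FP32 core ratio, GA100 vs GV100
```

Under this assumption, the larger tile does four times the work per operation, on top of the roughly 1.5x increase in cores.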

So far, these trends are what we expect from many AI chips: more cores with better matrix multiplication units targeted for deep learning (DL). But there are some notable design enhancements.

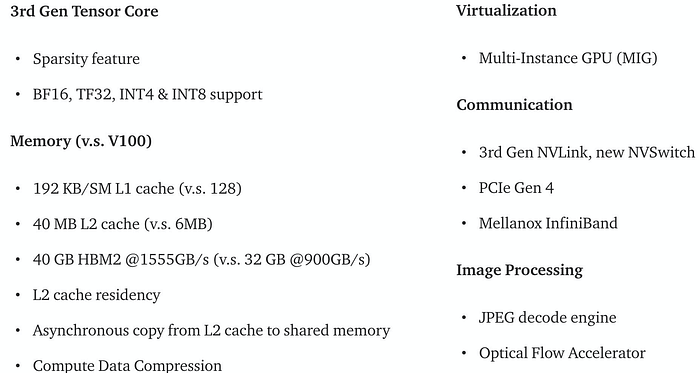

First, it addresses potential memory bottlenecks. The L2 cache increases disproportionately from 6MB to 40MB. The L1 cache per SM increases by 50%. In addition, developers have more control over what data is cached in L2 and the flexibility to bypass L1 when copying data directly to the SM shared memory.

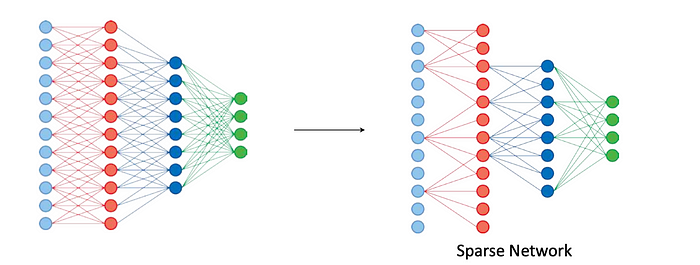

Second, weight sparsity in DNNs (deep neural networks) is heavily exploited. After data compression, the data volume transmitted between the off-chip memory and the on-chip cache decreases significantly, by 2x to 4x. The Tensor Core is also redesigned to exploit this sparsity for even faster operations.
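One way to picture the compression is a fixed-ratio scheme that keeps two of every four weights and records their positions. The sketch below assumes that 2-out-of-4 pattern and uses hypothetical helper names (`compress_2_of_4`, `sparse_dot`); it illustrates the idea only and is not NVIDIA's actual storage format.

```python
def compress_2_of_4(weights):
    """Keep the 2 largest-magnitude weights in each group of 4.

    Returns (values, indices): two kept values per group plus their
    positions (0-3) inside the group. Assumes len(weights) % 4 == 0.
    """
    values, indices = [], []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        ranked = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)
        keep = sorted(ranked[:2])
        values.extend(group[i] for i in keep)
        indices.extend(keep)
    return values, indices

def sparse_dot(values, indices, activations):
    """Dot product that touches only the kept weights."""
    total = 0.0
    for n, (v, i) in enumerate(zip(values, indices)):
        group_start = (n // 2) * 4   # two kept values per group of four
        total += v * activations[group_start + i]
    return total

w = [0.9, 0.0, -0.7, 0.1, 0.0, 0.4, 0.0, -0.3]
vals, idx = compress_2_of_4(w)
print(vals, idx)
print(sparse_dot(vals, idx, [1.0] * 8))
```

Half of the weight values are stored and multiplied, at the cost of a small index per kept value.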

Third, more data types (BF16, INT4, TF32) are supported. In DL, the general trend is 16-bit matrix arithmetic with a value range equal to that of FP32 for training, and hopefully even 8-bit for inference. As shown below, some improvements reach an order of magnitude for lower-precision arithmetic.
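The point about value range can be seen with a small experiment. The sketch below emulates BF16 by keeping only the top 16 bits of an FP32 value (plain truncation, which is a simplification of the rounding real hardware performs) and compares it with IEEE half precision via Python's `struct` module. The function names are ours.

```python
import struct

def to_bf16(x: float) -> float:
    """Emulate BF16 by truncating an FP32 value to its top 16 bits."""
    bits = struct.unpack(">I", struct.pack(">f", x))[0]
    return struct.unpack(">f", struct.pack(">I", bits & 0xFFFF0000))[0]

def fits_fp16(x: float) -> bool:
    """True if x can be packed as IEEE half precision without overflow."""
    try:
        struct.pack(">e", x)
        return True
    except OverflowError:
        return False

big = 3.0e38                      # near the top of the FP32 range
print(to_bf16(big))               # still finite: same exponent range as FP32
print(fits_fp16(big), fits_fp16(1000.0))
print(to_bf16(1.0 + 2**-10))      # small increments are lost: fewer mantissa bits
```

BF16 trades mantissa precision for range, which is why it can stand in for FP32 in training where FP16 would overflow.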

Fourth, inter-GPU communication is faster, to handle training of models that cannot fit into one GPU.

Fifth, new features, like video encoding/decoding, are added for end-to-end solutions.

These trends are very important as we look at many AI companies. Chip designers are solving the same problems, though not necessarily with the same solutions. Sparsity and memory bandwidth will remain critical for future development. But besides hardware support for weight sparsity, data sparsity and weight updates will be heavily studied (more on this in part 2).

In addition, Nvidia is the most mature AI chip provider. In response to real customer needs, GPU virtualization (the MIG feature turns a single GPU into multiple virtual GPUs) is added for better utilization in cloud-based inferencing.

For reference, these are the magnitudes of the performance improvements in the A100 GPU over the V100 for the specific enhancements.

Google TPU

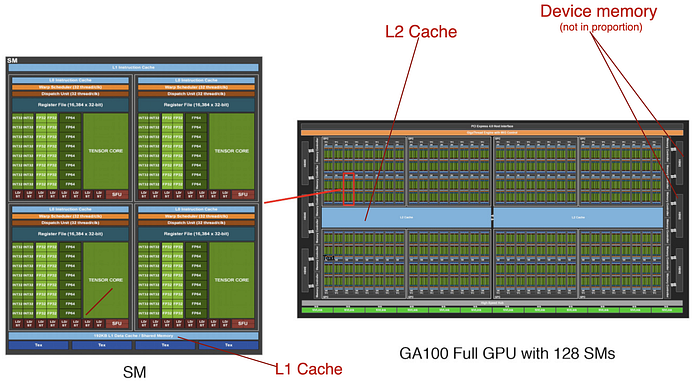

A general-purpose GPU provides programmability that many projects may consider “fat”. So it comes back to the question of whether this hurts or not. For Google, many servers are allocated to solving specific problems, so small improvements can save millions. Specifically, a GPU consumes more energy than an ASIC (application-specific integrated circuit) and has higher latency.

Chip companies can design ASIC chips for maximum efficiency. But ASICs are expensive to develop and do not adapt to changes. Since the breakout success of AI in 2012, Google has gathered abundant experience in narrowing down these design requirements. In fact, it concluded that the Google TPU just needs high throughput for matrix multiplication, activation, normalization, and pooling.

TPU (details) takes away much of the control logic, in particular the logic for instruction fetching and scheduling, and shifts this work to the CPU host. The TPU simply acts as a coprocessor and provides vertical instructions.

This leads to a very important design trend in general.

Work like instruction scheduling, optimization, and resource assignment is shifted to the compiler and the runtime library on the CPU.
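A toy model of this division of labor: the host "compiles" a list of layers into a flat instruction stream ahead of time, and the accelerator executes it in order with no scheduling logic of its own. All names here (`compile_model`, `run_coprocessor`, the opcode strings) are hypothetical and do not reflect the real TPU instruction set.

```python
def compile_model(layers):
    """Host side: lower layers into a fixed, ordered instruction list."""
    program = []
    for weights, activation in layers:
        program.append(("MATMUL", weights))
        if activation == "relu":
            program.append(("RELU", None))
    return program

def run_coprocessor(program, x):
    """Device side: execute instructions in order, with no scheduling decisions."""
    for op, arg in program:
        if op == "MATMUL":
            x = [sum(w * v for w, v in zip(row, x)) for row in arg]
        elif op == "RELU":
            x = [max(0.0, v) for v in x]
    return x

layers = [([[1.0, -1.0], [0.5, 0.5]], "relu"), ([[2.0, 1.0]], None)]
program = compile_model(layers)
print([op for op, _ in program])
print(run_coprocessor(program, [1.0, 3.0]))
```

All decisions about ordering are made before execution starts, so the device loop stays trivially simple.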

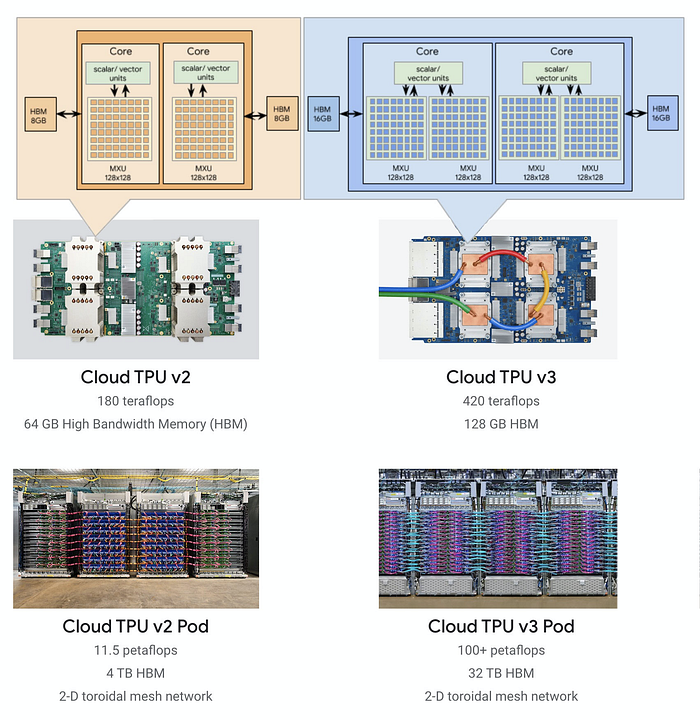

As shown in the diagram below, TPU v3 doubles the number of cores of v2, and its TFLOPS figure is a little more than double. The coming TPU v4 will more than double the performance again (2.7x). The throughput of its matrix multiplication units has more than doubled. Not much else is known yet, but it would not be surprising if the number of cores has doubled or the matrix multiplication size has increased. Unspecified advances in interconnect technology that increase memory bandwidth are also mentioned. This reinforces the memory constraints that many designers face when pushing for higher TOPS.

Google has the monetary resources, the power, and the will to build a vertical solution. Its own datacenters and cloud services already provide a mature market for TPUs. Keeping things focused, keeping it simple, and optimizing what matters seem to be the key principles for TPU.

Intel FPGA

However, the rigidity of ASICs bothers companies like Facebook and Microsoft. For better flexibility in designs and options, they are looking into solutions like FPGA. The hardware design of a GPU or TPU cannot be changed. But for decades, FPGAs have given hardware designers a reconfigurable alternative to ASIC design that can be reprogrammed in ∼20ms.

FPGA Overview (Optional)

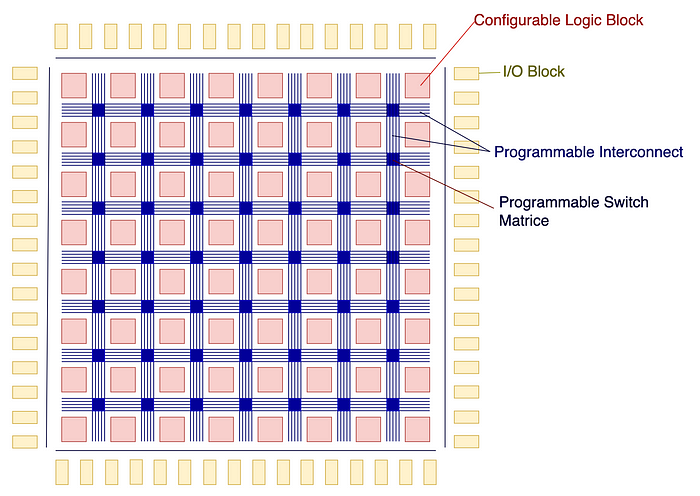

Software engineers are less familiar with FPGA, so let’s have a simple overview. An FPGA contains an array of blocks for logic and arithmetic functions. Much thought has gone into its reconfigurability, so that custom functions can be created in each block. In addition, these blocks can be connected through programmable interconnect to build custom features with specific concurrency, latency, I/O throughput, and power consumption.
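To make the idea of a configurable block concrete, the sketch below models a block as a small lookup table (LUT), a common building block in FPGAs, and routes the same inputs to two of them to form a half adder. The `Lut` class is a hypothetical software model for illustration, not vendor tooling.

```python
class Lut:
    """A k-input lookup table: the 'configuration' is just its truth table."""

    def __init__(self, truth_table):
        self.table = list(truth_table)  # one output bit per input combination

    def __call__(self, *inputs):
        index = 0
        for bit in inputs:              # inputs form the table address, MSB first
            index = (index << 1) | bit
        return self.table[index]

# "Program" two blocks with different functions.
xor_block = Lut([0, 1, 1, 0])
and_block = Lut([0, 0, 0, 1])

def half_adder(a, b):
    """'Interconnect': route the same inputs to both blocks."""
    return xor_block(a, b), and_block(a, b)  # (sum, carry)

print([half_adder(a, b) for a in (0, 1) for b in (0, 1)])
```

Reprogramming the chip amounts to loading different truth tables and different routing, which is what a bitstream encodes.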

FPGA also provides other blocks, like local memory and IP blocks (a.k.a. reusable hardware cell designs) for vertical solutions. In particular, DSP blocks, which are optimized for vector operations and multiplications, have been repurposed for ML/DL acceleration.

Intel FPGA

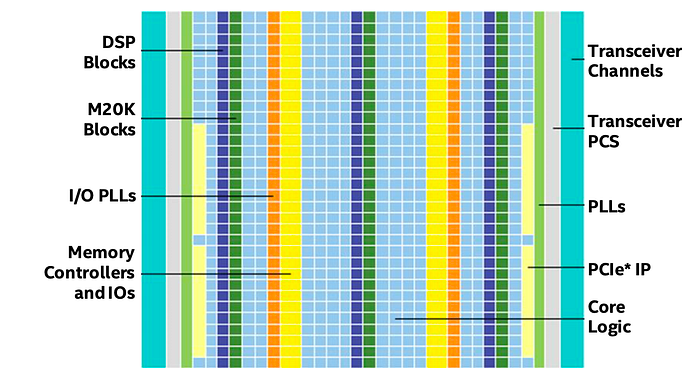

Microsoft Project Brainwave uses Intel Stratix 10 FPGAs to accelerate DNN inferencing in services like Bing’s intelligent search features and Azure. Intel offers other FPGA product lines, including Agilex (high-end) and Arria, for different market segments.

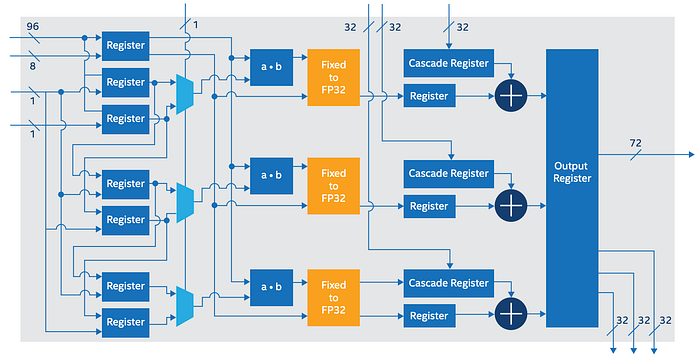

Like GPU, FPGA was originally designed for different markets. FPGA offers fine-grain connectivity among blocks for building customized functions. But this is “fat” for AI chips in some contexts. The interconnect needed for this fine-grain connectivity increases the die size significantly. It also increases latency and power consumption. AI chips need coarse-grain connectivity with more complex blocks. To address these needs, specialized AI IP blocks are created. For example, the Intel Stratix 10 NX FPGA is specifically designed with AI Tensor Blocks. These blocks contain dense arrays of lower-precision multipliers optimized for matrix and vector multiplications with INT4, INT8, Block FP12, or Block FP16 operations. In addition, these tensor blocks can be cascaded together to support large matrices.
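Block floating point is worth a brief illustration: a group of values shares one exponent, so each value only needs a short integer mantissa. The sketch below is a generic version of the idea with an assumed 8-bit signed mantissa; the exact bit layout of Intel's Block FP12 and Block FP16 formats is not reproduced here, and the function names are ours.

```python
import math

def block_quantize(block, mant_bits=8):
    """Quantize a block to signed integers that share one exponent."""
    max_abs = max(abs(v) for v in block)
    if max_abs == 0.0:
        return [0] * len(block), 0
    _, exp = math.frexp(max_abs)          # guarantees max_abs < 2**exp
    shared_exp = exp - (mant_bits - 1)    # exponent of one integer step
    limit = 2 ** (mant_bits - 1) - 1      # largest mantissa magnitude
    scale = 2.0 ** shared_exp
    mantissas = [max(-limit, min(limit, round(v / scale))) for v in block]
    return mantissas, shared_exp

def block_dequantize(mantissas, shared_exp):
    """Recover approximate values from mantissas and the shared exponent."""
    return [m * 2.0 ** shared_exp for m in mantissas]

block = [1.0, 0.5, -0.25, 0.01]
mants, e = block_quantize(block)
print(mants, e)
print(block_dequantize(mants, e))
```

Values close to the block maximum keep good precision, while much smaller values (like 0.01 here) lose accuracy, which is the price of sharing the exponent.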

DNN layers mapping

However, there is a huge gap between AI models and FPGA programming, which is expressed in terms of clocks and signals. In FPGA, designers use an HDL (Hardware Description Language) to describe the hardware design, which is later compiled into bitstreams to reconfigure the chip. For AI, FPGA vendors invest heavily in bitstreams specialized for individual DL models like ResNet, GoogLeNet, etc.

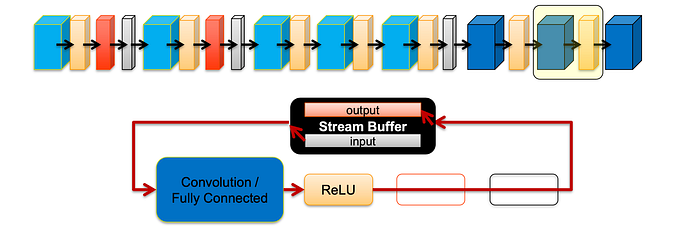

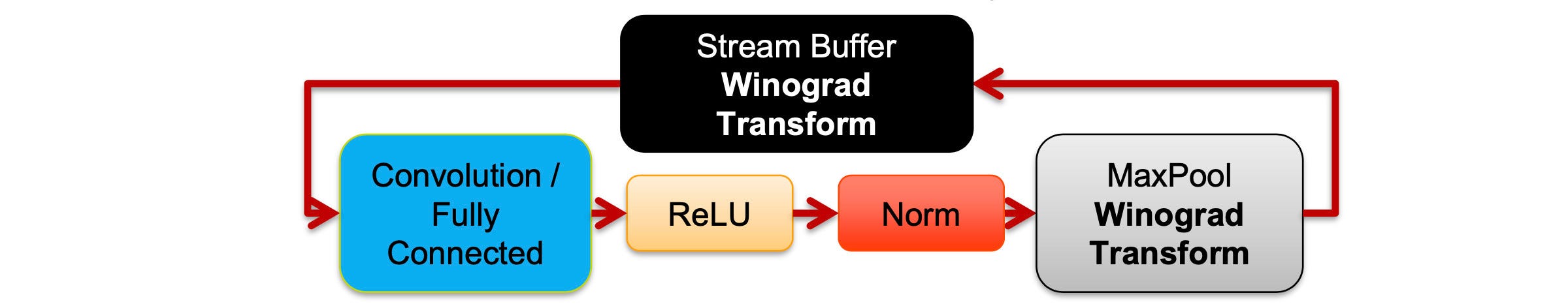

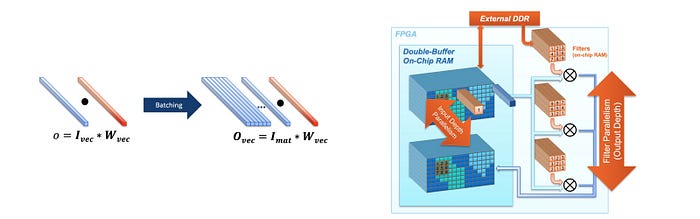

In these bitstreams, DNN layers are mapped into a pipeline for processing, similar to TPU. In the demonstration below, AlexNet layers are grouped into blocks containing the CNN layer, ReLU, normalization, and max pool. Each block will be executed in the pipeline with a stream buffer holding intermediate calculations.

These blocks are bypassable and highly reconfigurable at runtime.

With the flexibility of designing your own hardware, you can implement algorithms like the Winograd transform to accelerate CNN convolutions.
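For readers unfamiliar with Winograd, here is a minimal sketch of the smallest 1D case, usually written F(2,3): it produces two outputs of a 3-tap filter with 4 multiplications instead of 6. This is a textbook formulation in plain Python for illustration, not the FPGA implementation.

```python
def direct_conv(d, g):
    """Two outputs of a 3-tap filter over 4 inputs: 6 multiplications."""
    return [d[0] * g[0] + d[1] * g[1] + d[2] * g[2],
            d[1] * g[0] + d[2] * g[1] + d[3] * g[2]]

def winograd_f23(d, g):
    """Same two outputs with 4 multiplications (Winograd F(2,3))."""
    # Filter transform: can be precomputed once per filter.
    u0 = g[0]
    u1 = (g[0] + g[1] + g[2]) / 2
    u2 = (g[0] - g[1] + g[2]) / 2
    u3 = g[2]
    # Four element-wise multiplications on transformed inputs.
    m1 = (d[0] - d[2]) * u0
    m2 = (d[1] + d[2]) * u1
    m3 = (d[2] - d[1]) * u2
    m4 = (d[1] - d[3]) * u3
    # Output transform: additions only.
    return [m1 + m2 + m3, m2 - m3 - m4]

d = [1.0, 2.0, 3.0, 4.0]
g = [0.5, -1.0, 2.0]
print(direct_conv(d, g), winograd_f23(d, g))
```

The saving comes from trading multiplications for additions, which are cheaper in hardware; the filter transform is paid once per filter rather than once per output.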

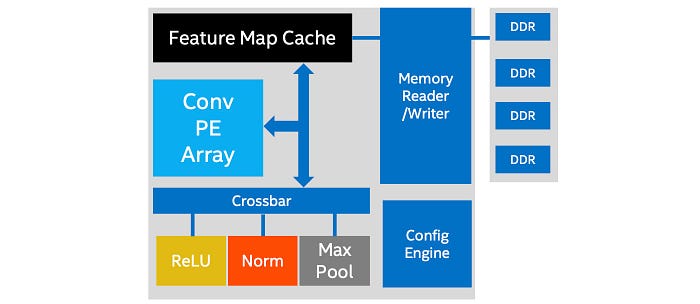

This architecture is realized by an array of convolution Processing Elements (PEs) connected to the ReLU, Norm, and Max Pool modules through a crossbar. To provide better flexibility than ASIC, custom blocks can be compiled and attached to the crossbar below for new DNN layer designs.

Without starting from scratch, FPGA provides the flexibility of using vertical IP blocks and specialized bitstreams to customize a new hardware design that aims for ASIC-like performance. In addition, it can accelerate the ML algorithms needed in Microsoft or Facebook data centers beyond the common deep learning algorithms.

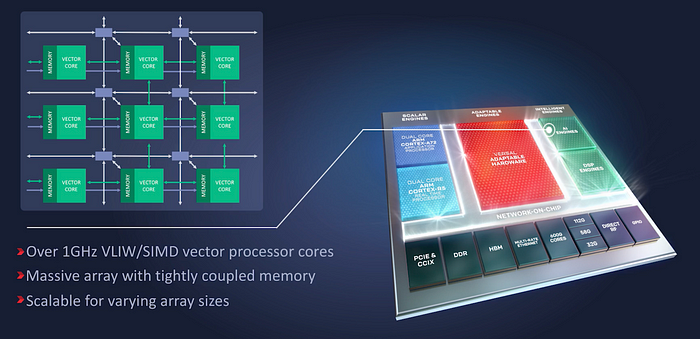

Xilinx ACAP

Xilinx Versal is an Adaptive Compute Acceleration Platform (ACAP). ACAP is a heterogeneous compute platform that combines Scalar Engines, Adaptable Engines (a.k.a. configurable logic blocks, CLBs), and AI Engines. We can view this as the AI version of an SoC (system on chip). All these engines are interconnected by a network-on-chip (NoC) to achieve multi-terabit communication.

The AI Engines contain an array of VLIW/SIMD vector cores with tightly coupled local memory. Like FPGAs, they are highly configurable for specialized hardware design, and they are targeted at DL inference.

Flexibility and Generalization

The flexibility of FPGA helps big software companies accelerate a specific set of AI applications in their massive data centers. Microsoft’s Brainwave project with Intel is one of them. The power consumption and latency are targeted to match ASIC designs. But hardware reconfigurability, without being locked into one design plan, is the key selling point.

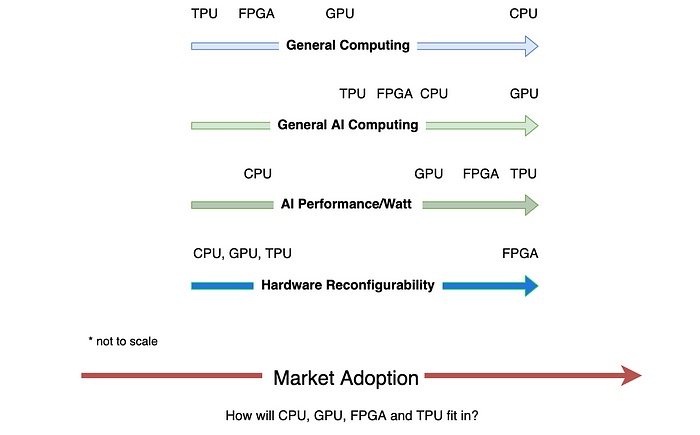

This leads to one critical question for chip companies. Generalization acts against performance and power consumption. What market do you want your product to fit? How much flexibility are you willing to provide at the cost of efficiency and performance? Is the chip targeted for inference, training, or both? Betting on the wrong spot will cost the future of the product, if not the company.

The big high-tech firms keep the status quo and make incremental changes based on their expertise. Many startups cannot fight in their territories and hope to win by creating a new technical paradigm shift. This leads us to new architectures that emphasize dataflow. But before talking about their solutions, let us look at the general issues of the traditional approaches first. For those just interested in what companies are doing, feel free to skip the next section.

Dataflow vs. Instruction Flow (Optional)

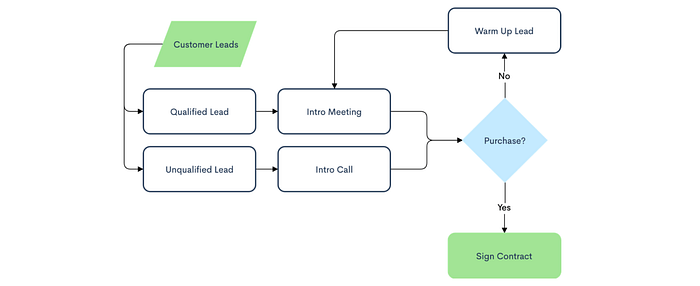

Let’s say a project manager passes you the following business flow to code. How well can the modern computer architecture handle it? The answer is very well.

Indeed, a CPU executes serial instructions well and optimizes branching logic with branch prediction and speculative execution. For concurrent processing, it adds superscalar execution and multiple cores. But DL behaves like a data flow in a computation graph. The data volume, data dependency, and parallelism are far too complex to execute efficiently on a CPU.

In GPU or TPU, we improve data parallelism for fully connected layers by increasing the batch size. For CNN, we increase parallelism by exploring the filter depth (i.e. the model width).

But the model depth is not explored by GPU or TPU.

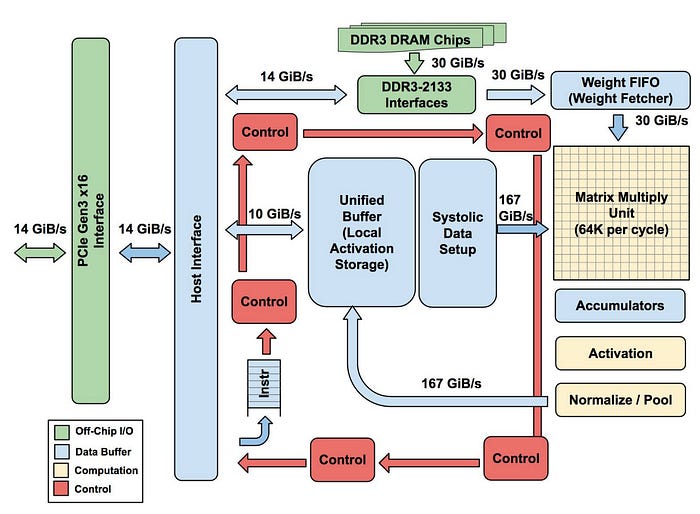

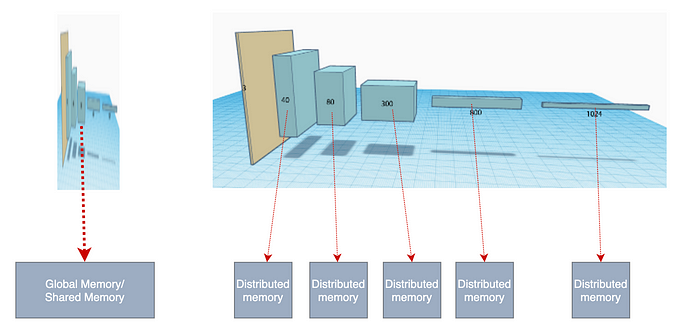

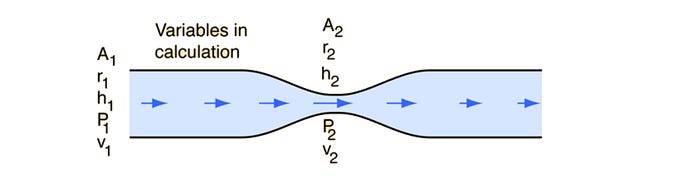

This leads to a big issue that haunts GPU and TPU designers — the memory bandwidth required to support thousands of concurrent operations. Without a spatial dimension concept, we squeeze all the memory requests into a shared global memory resource. We can think naively that we have a large shared on-chip memory to hold all the input, the activations, and the weights.

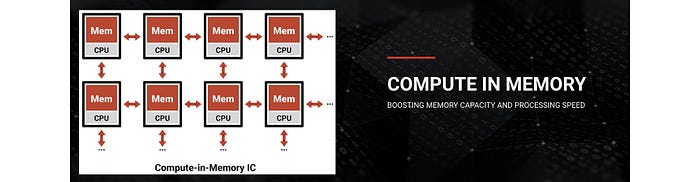

But this central memory will become a major bottleneck and may not be practical. Alternatively, chip designers implement a complex caching scheme to reduce the traffic to the off-chip memory. GPU designers spend a lot of effort and die space addressing this issue, in the hope that if the batch size and filter depth are big enough, data locality will be sufficient and the problem can be mitigated by caching. Also, the GPU gives developers more memory structures (like the SM’s local shared memory) and cache controls. This improves the cache hit ratio significantly. But many startups bet in a different direction. Their core designs emphasize distributed local memory to reduce data movement.
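Why a larger batch helps can be seen from a rough arithmetic-intensity estimate (FLOPs per byte moved) for one fully connected layer. The model below is deliberately simplified and the function name is ours: it assumes 16-bit values and that weights, inputs, and outputs each cross the memory interface exactly once.

```python
def arithmetic_intensity(batch, n_in, n_out, bytes_per_value=2):
    """FLOPs per byte moved for one fully connected layer.

    Simplifying assumption: weights, inputs, and outputs each cross the
    memory interface exactly once.
    """
    flops = 2 * batch * n_in * n_out          # one multiply + one add per MAC
    values_moved = n_in * n_out + batch * n_in + batch * n_out
    return flops / (values_moved * bytes_per_value)

for batch in (1, 32, 1024):
    print(batch, round(arithmetic_intensity(batch, 4096, 4096), 1))
```

With a batch of 1, each weight fetched is used once, so the layer is bound by memory bandwidth; with a large batch, the same weights are reused many times and compute dominates.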

There is another mismatch in the programming model. In a CPU, instructions read (or write) data in registers that comes from (or goes to) memory. This enforces a permanent “storage” concept for holding transient calculations. If there is a lot of data, it thrashes the register files or the cache.

In contrast, the dataflow in a DL model is closer to a pipeline model, like the one on the right above (similar to Unix pipes: op1 input | op2 | op3 | op4).
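The Unix-pipe analogy maps naturally onto Python generators, where each stage consumes values from the previous stage as they become available and nothing is written to a shared intermediate buffer. The three operations below are arbitrary placeholders chosen for illustration.

```python
def op1(source):
    for x in source:          # scale
        yield 2 * x

def op2(stream):
    for x in stream:          # shift
        yield x - 3

def op3(stream):
    for x in stream:          # ReLU-like clamp
        yield max(0, x)

def run_pipeline(inputs):
    """Equivalent to: op1 inputs | op2 | op3."""
    return list(op3(op2(op1(inputs))))

print(run_pipeline([0, 1, 2, 3]))
```

Each value flows through all stages without being parked in a named storage location, which is the contrast with the register-and-memory model described above.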

DL Architecture

Many hardware designs are instruction focused. But DL is about data volume. If we cannot handle the data movement well, both performance and power consumption will suffer.

Let’s consider a new architecture approach that

- can utilize distributed memory in

- a pipeline structure.

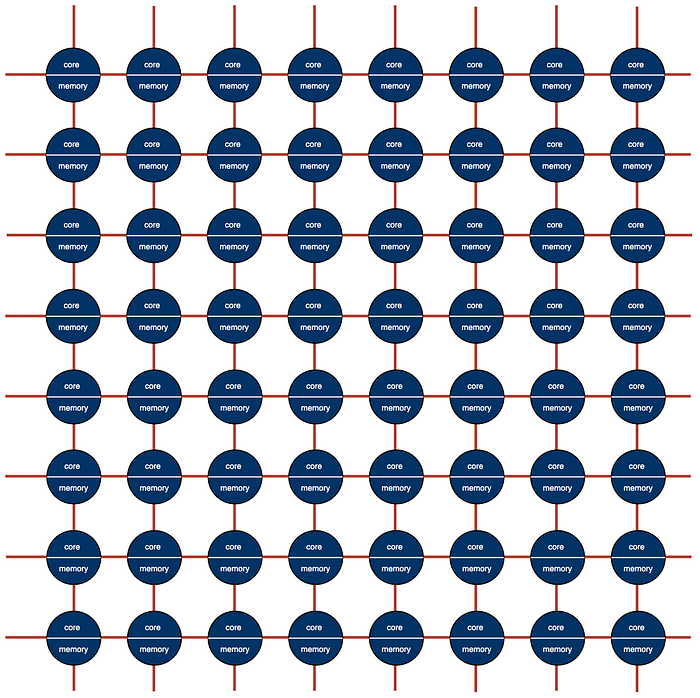

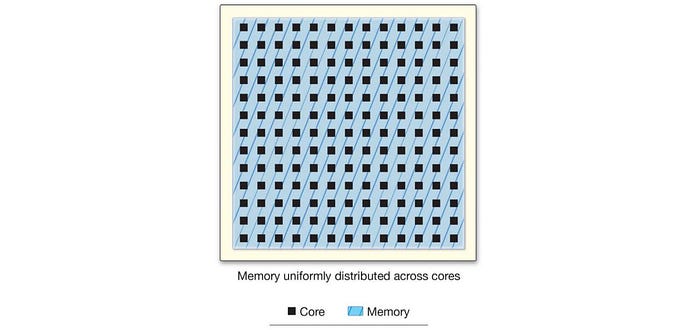

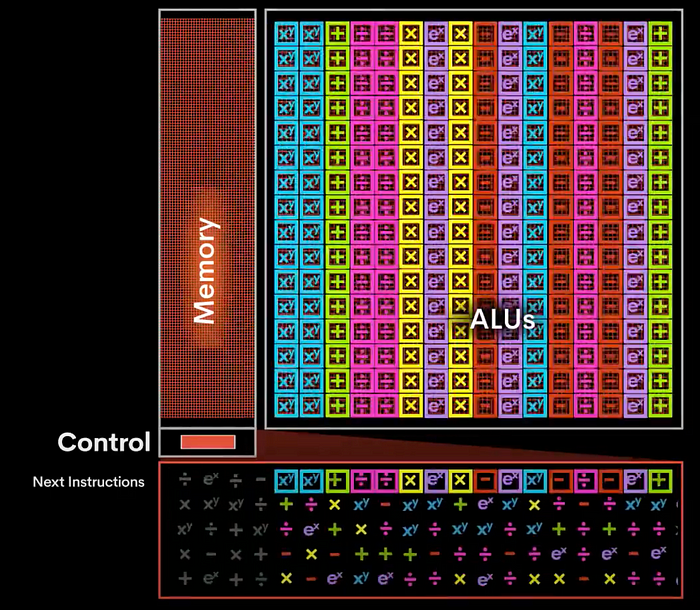

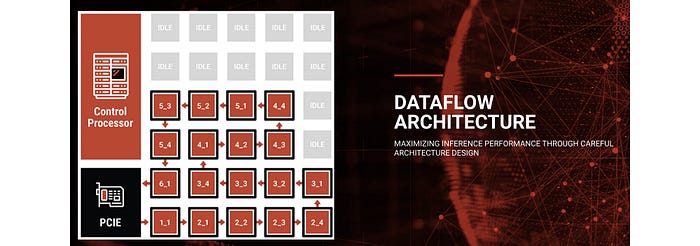

The new design contains an array of cores with distributed memory overlaid on top of a network on chip (NoC), say in a mesh configuration. In fact, you will see this often in many startups, just in different variants. The cores above are often called tiles. This new architecture reintroduces the spatial structure that puts the model depth back into the equation. Workloads are run in a pipeline fashion to increase throughput.

This array of tiles can be segmented into sub-regions where each region runs a separate DL model. Or a set of tiles can run multiple models for better tile utilization.

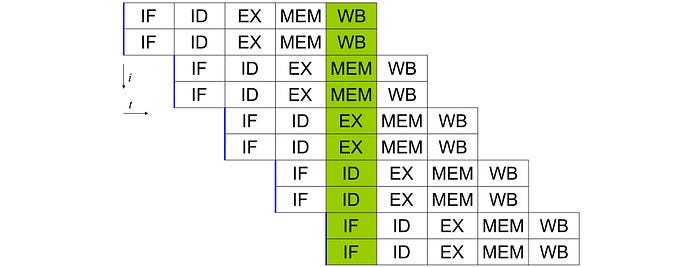

This pipeline concept is similar to the instruction pipeline in a CPU, but on the macro scale. CPU instruction execution is carried out in stages. Because each stage runs on a different part of the hardware, instructions can be overlapped. For example, the diagram below shows a hyperthreaded CPU that processes 2 instructions concurrently. With pipelining, it completes 2 instructions in each clock cycle. Without the pipeline, it cannot overlap instruction execution and finishes only 2 instructions every 5 clock cycles.
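The cycle counts behind that comparison are easy to reproduce. The sketch below assumes an idealized 5-stage pipeline that issues 2 instructions per cycle with no stalls or hazards; the function names and defaults are ours.

```python
import math

def cycles_pipelined(n_instr, stages=5, width=2):
    """Cycles when a new group of `width` instructions enters every cycle."""
    groups = math.ceil(n_instr / width)
    return stages + groups - 1

def cycles_unpipelined(n_instr, stages=5, width=2):
    """Cycles when each group must finish all stages before the next starts."""
    return stages * math.ceil(n_instr / width)

n = 1000
print(cycles_pipelined(n), cycles_unpipelined(n))
```

For long instruction streams the pipelined count approaches one group per cycle, so the speedup approaches the number of stages.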

But in any pipeline, you don’t want a bottleneck. In our DL situation, this gets worse if the workload is spread disproportionately across the tiles. This causes congestion and lowers tile utilization.

Since the DL layer designs and model capacity are known ahead of time, we can depend on the compiler or the runtime library to assign loads properly. Many startups are developing advanced compilers to map and schedule the model layers and node computations onto this array of tiles.
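As a rough illustration of what such a compiler has to do, the sketch below hands out tiles to layers so that the slowest pipeline stage is as fast as possible. It is a greedy heuristic under strong assumptions: a layer's time is its cost divided by its tile count, and communication is ignored. The function name and the cost figures are hypothetical.

```python
import heapq

def assign_tiles(layer_costs, n_tiles):
    """Give each layer one tile, then hand out the rest to the slowest stage.

    Assumes n_tiles >= len(layer_costs).
    """
    tiles = [1] * len(layer_costs)
    # Max-heap on per-stage time (cost / tiles), stored as negative values.
    heap = [(-cost, i) for i, cost in enumerate(layer_costs)]
    heapq.heapify(heap)
    for _ in range(n_tiles - len(layer_costs)):
        _, i = heapq.heappop(heap)
        tiles[i] += 1
        heapq.heappush(heap, (-layer_costs[i] / tiles[i], i))
    return tiles

costs = [8.0, 2.0, 4.0, 2.0]
tiles = assign_tiles(costs, 8)
print(tiles, max(c / t for c, t in zip(costs, tiles)))
```

In this example every stage ends up taking the same time, so no tile waits on a slower neighbor.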

But there are many variants of this architecture. Many differences are subtle, but they have a big impact. So let’s look at the startups to find these variants.

Graphcore

Graphcore has a 2nd generation Colossus MK2 IPU processor (GC200) as well as the IPU-M2000 server powered by 4 GC200 IPUs. It also has an IPU-POD configuration with a maximum of 64K IPUs. This achievement is clearly reflected in the company’s $1.95B valuation in Feb 2020.

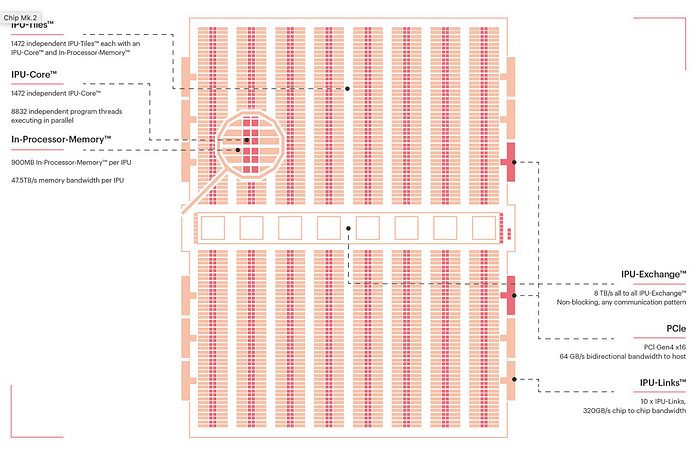

Graphcore designs its own IPU (Intelligence Processing Unit), which is fabricated by TSMC using a 7 nm process. It uses MIMD (Multiple Instruction, Multiple Data) and has 1472 tiles. Similar to TPU and GPU, threads are oversubscribed to tiles to achieve higher utilization. Each tile can run 6 threads (a total of 8832 threads at the IPU level); it maintains a separate hardware context for each thread and multiplexes the threads onto shared resources.

It has 900MB of SRAM in-processor memory (about 624KB/tile) at 47.5 TB/s. Each tile has its own local memory and no global memory is used. With this distributed concept, developers are responsible for partitioning their working set across the tiles’ memories and for making tiles exchange data for non-local operands. But most of the complexity will be hidden from developers by the compilers. (Note: some information in this section originates from a third-party report. It is an excellent source of information on the IPU design, but be aware that its specifications are based on the first-generation chip.)

The IPU supports FP32, FP16.32 (16-bit multiply, 32-bit accumulate), FP16.16 (16-bit multiply-accumulate), and stochastic rounding. According to Graphcore, this allows them to keep all arithmetic in 16-bit formats. It is also optimized for model sparsity. IPU cores provide more complex functions than the GPU’s SMs, and they pay no penalty for branch divergence. Also, each tile can generate a separate random number seed, which is needed for ML algorithms like evolution models (a kind of trial-and-error method that uses educated guesses).
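Stochastic rounding deserves a short illustration, since it is what makes low-precision accumulation workable: a value is rounded up or down at random, with probabilities chosen so that the result is correct on average. The sketch below is a generic software version with a hypothetical function name, not Graphcore's hardware implementation.

```python
import math
import random

def stochastic_round(x, step, rng):
    """Round x to a multiple of `step`, rounding up with probability
    equal to the fractional distance to the next multiple."""
    scaled = x / step
    lower = math.floor(scaled)
    round_up = rng.random() < (scaled - lower)
    return (lower + round_up) * step

rng = random.Random(0)
samples = [stochastic_round(0.3, 1.0, rng) for _ in range(10_000)]
print(sum(samples) / len(samples))   # close to 0.3 on average
print(round(0.3))                    # round-to-nearest always gives 0
```

With round-to-nearest, many small updates below half a step would all vanish; with stochastic rounding, their effect survives in expectation.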

Tiles are connected in an all-to-all fashion: any tile can communicate with any other tile in the IPU with short latency. It has 10 IPU link interfaces (video) to support high-bandwidth 320GB/s communication among IPUs. These factors allow fast access to memory in remote IPUs but, more importantly, the high-speed connection transforms a cluster of IPUs into a single node. This allows us to map a large DNN easily. It also has 2 PCIe Gen4 interfaces for communication with the CPU host.

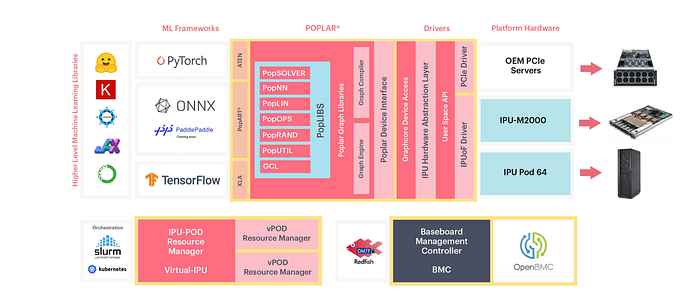

Graphcore uses its Poplar software system to deploy applications to servers. This quote from Graphcore is a good summary of the role of the compiler in this new architecture.

You can use your preferred AI framework, such as TensorFlow or PyTorch, and from this high-level description, Poplar will build the complete compute graph, capturing the computation, the data and the communication. It then compiles this compute graph and builds the runtime programs that manage the compute, the memory management and the networking communication, to take full advantage of the available IPU hardware.

Cerebras

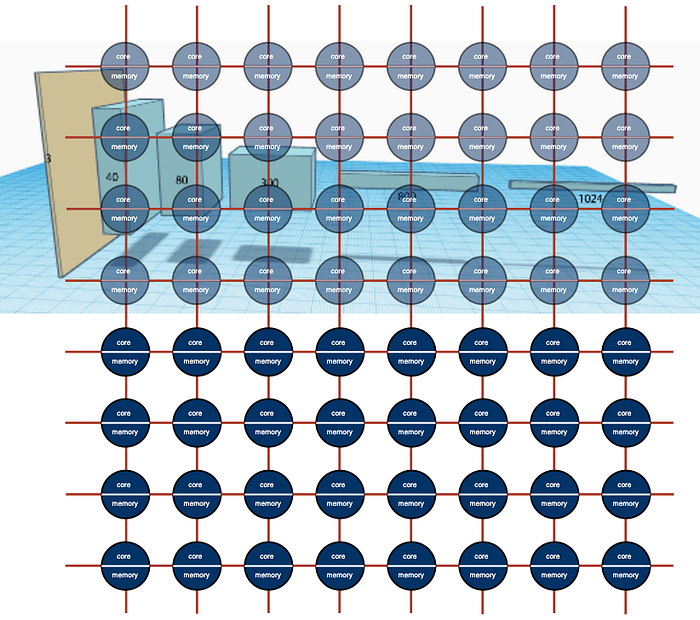

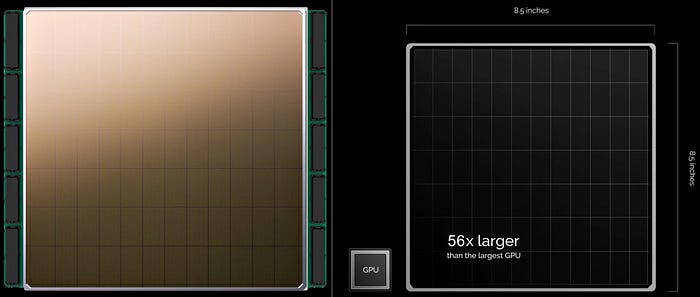

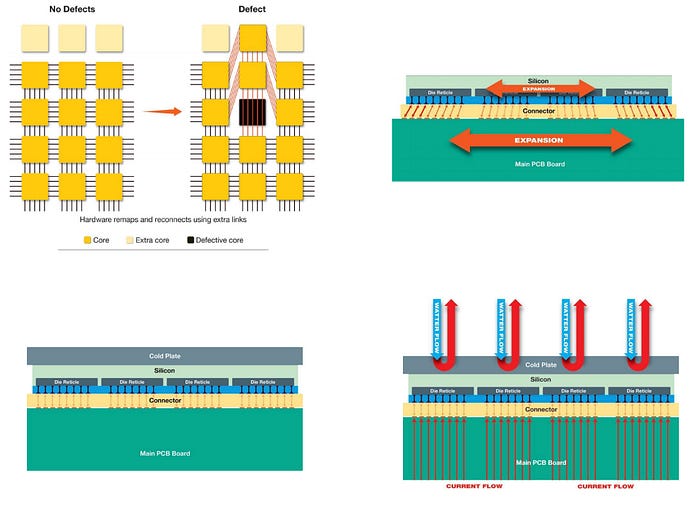

An SoC (system on a chip) packs the majority of computer components on a single die, which is later packaged into a chip. But why do we stop our imagination there? In DL, we use a cluster of nodes to train complex DL models. Instead of cutting the dies off from the wafer, Cerebras builds a cluster of systems on a single wafer. That is the key idea behind the Cerebras Wafer Scale Engine (WSE).

This wafer contains 84 “chips” (as shown above as 7×12), a total of 400K cores, and 18GB on-chip memory.

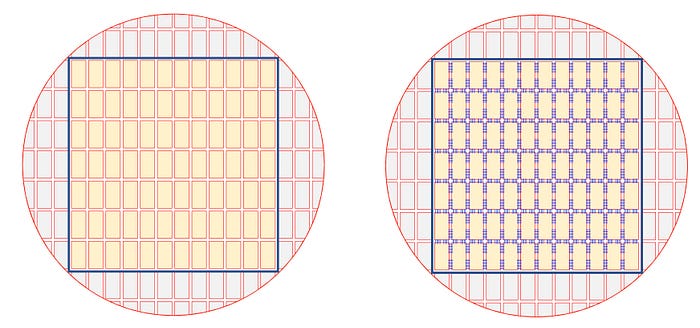

In standard chip fabrication, scribe lines separate each die for later cutting.

In WSE, wires are added on top of the scribe lines to form a 2D mesh network. Communication is done directly by hardware with small single-word messages. This network achieves an ultra-high bandwidth of 100 Pb/s with short latency. In fact, connectivity across scribe lines is no different from connectivity between cores within a die. The whole wafer can be viewed as a large array of cores.

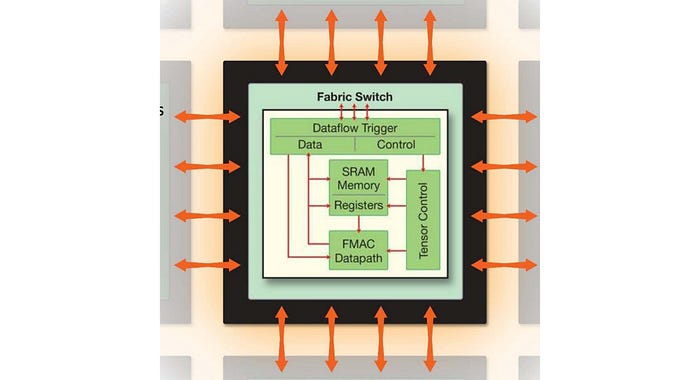

WSE contains 400K cores handling general instructions including arithmetic, logical, load/store, and branch operations. It also includes tensor operations for DL acceleration. Each core runs independently of the others, which allows heterogeneous workloads to run on the cores concurrently. Multiple cores can also work in parallel on one DNN layer.

WSE also adopts distributed memory that is spread over the wafer, without a central memory.

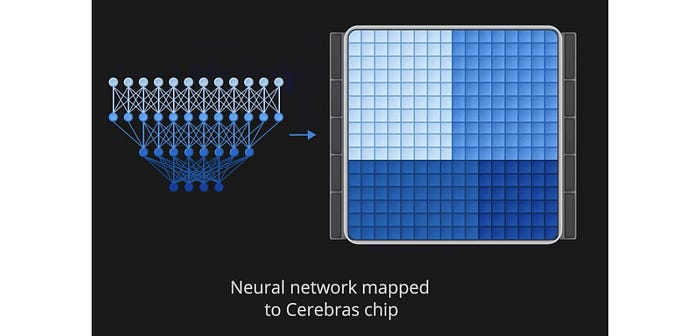

The whole DNN will be mapped and run on a single wafer. This takes advantage of the large on-chip memory as well as the high-bandwidth and low-latency communication between cores at the silicon level.

The Cerebras Graph Compiler (CGC) translates the DNN to an optimized WSE executable. Kernels corresponding to each DNN layer are sized: more complex workloads are assigned larger groups of cores. CGC then generates a placement and routing to minimize communication latency between the layers. (Polyhedral compilation techniques are applied here.)

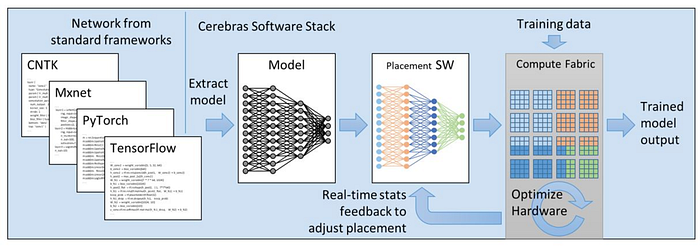

The DL model is extracted from standard frameworks and converted to the Cerebras Linear Algebra Intermediate Representation (CLAIR).

I will quote directly from here for its compiler strategy. It explains in more detail the tasks and requirements of the compiler. (The massive array of cores is referred to as the compute fabric here.)

CGC performs a matching and covering operation that matches subgraphs to kernels from the Cerebras kernel library. … The result of this matching operation is a kernel graph.

Using its knowledge of the unique WSE architecture, CGC then allocates compute and memory to each kernel in the graph and maps each kernel onto a physical region of the computational array of cores. Finally, a communication path, unique to each network, is configured onto the fabric.

…

During this compilation process, kernel placement is formulated as a multi-constraint problem on 1) memory capacity and bandwidth, 2) computation requirements, and 3) communication costs. The placement engine takes into account both algorithmic efficiency and compute core utilization to generate a result that maximizes locality, minimizes communication requirements, and avoids hotspots and contention. The final result is a CS-1 executable, ...
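To give a feel for the placement problem described in the quote, here is a deliberately tiny sketch that considers only communication cost: it tries every assignment of kernels to regions of a 2×2 mesh and keeps the one with the least traffic-weighted hop count. The kernel names, traffic volumes, and brute-force search are illustrative assumptions; the real compiler also handles memory and compute constraints and cannot enumerate placements this way.

```python
from itertools import permutations

def manhattan(a, b):
    """Hop count between two mesh coordinates."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])

def best_placement(kernels, traffic, slots):
    """Try every kernel-to-slot assignment; keep the cheapest.

    Cost = sum over communicating pairs of (traffic volume x mesh hops).
    Brute force is only viable for tiny examples.
    """
    best, best_cost = None, float("inf")
    for perm in permutations(slots, len(kernels)):
        place = dict(zip(kernels, perm))
        cost = sum(vol * manhattan(place[a], place[b])
                   for (a, b), vol in traffic.items())
        if cost < best_cost:
            best, best_cost = place, cost
    return best, best_cost

kernels = ["conv1", "conv2", "fc"]
traffic = {("conv1", "conv2"): 10, ("conv2", "fc"): 4}
slots = [(0, 0), (0, 1), (1, 0), (1, 1)]   # a 2x2 mesh of core regions
placement, cost = best_placement(kernels, traffic, slots)
print(placement, cost)
```

The cheapest placement puts each pair of communicating kernels in adjacent regions, which is the "maximize locality" goal in miniature.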

The core can process sparse models natively in hardware for high speedup. The dataflow and computation are triggered by data. Zero data branches are filtered out to avoid unnecessary processes. Because cores run independently, the dataflow can run continuously whenever possible without synchronizing with other cores.
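The idea of computation being triggered by data can be sketched in a few lines: work happens only when a non-zero operand arrives, so zeros cost nothing. This is a software analogy with a hypothetical function name, not a model of the actual core.

```python
def dataflow_mac(activations, weights):
    """Accumulate only when a non-zero activation 'arrives'.

    Returns (result, multiplies_performed).
    """
    total, fired = 0.0, 0
    for a, w in zip(activations, weights):
        if a == 0.0:
            continue          # zero operand: no work is triggered
        total += a * w
        fired += 1
    return total, fired

acts = [0.0, 1.5, 0.0, 0.0, 2.0, 0.0]   # e.g. post-ReLU activations
wts = [0.3, 0.2, -0.1, 0.7, 0.5, 0.9]
print(dataflow_mac(acts, wts))
```

Here only 2 of 6 multiplications are performed, and the saving grows with the fraction of zeros in the data.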

This is an example of designers exploring native hardware support for scheduling and routing to handle sparsity.

Manufacturing Challenges (Optional)

Using a whole wafer to build a system was attempted before AI chips. But there are many challenges to solve, including manufacturing yield, thermal expansion in the package, package assembly, and power and cooling. Cerebras solves them by adding fault-tolerant design, developing custom connectors between layers (top right below), and customizing new packaging tools for precise alignment. To cool the silicon, a cold plate is placed on top, and power is supplied underneath it.

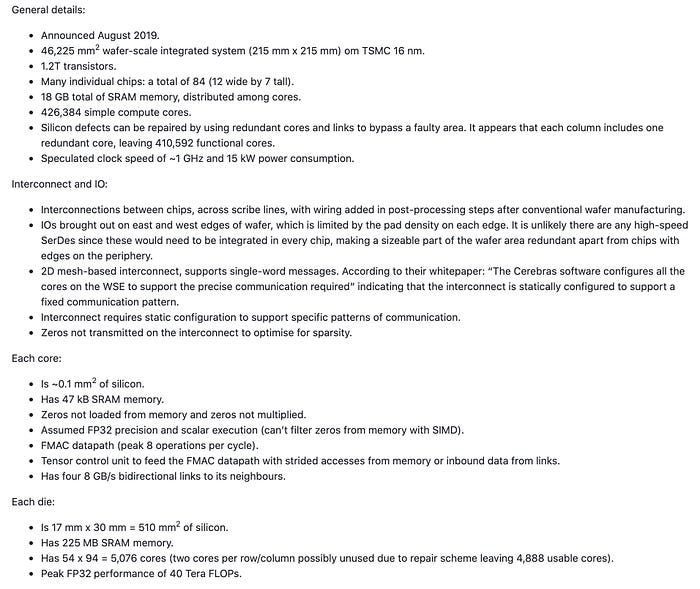

Specification (Optional)

Here is the general information available from a third-party source. In this article, we will present some high-level specifications. Feel free to skip or browse through them according to your interest.

Groq

Here is a nice video summarizing the design concept of Groq’s Tensor Streaming Processor (TSP).

As demonstrated in the video, it has a slightly different pipeline structure. The pipeline moves from left to right, and cores in the same column execute the same instruction (SIMD). The TSP contains a single processor that has hundreds of functional units.

The majority of the control logic and cache is gone. It strongly depends on the compiler and the host in issuing instructions. This greatly reduces instruction-decoding overhead.

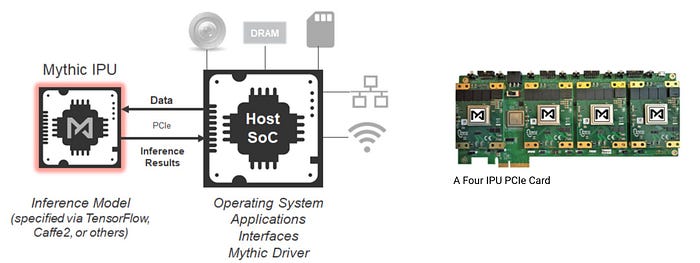

Mythic

Mythic chips are tile-based and feature three fundamental hardware technologies:

- Compute-in-memory,

- Dataflow architecture and

- Analog computing.

We have discussed the first two concepts already, so we will be brief. The first one uses localized memory.

The second one uses the dataflow concept to run the DNN graph.

As quoted from Mythic, this is how the pipeline works with a little bit more detail.

When the results from one graph node are completed, they flow to the next graph node to start the next operation, which is ideal for dataflow architecture. In our dataflow architecture, we assign a graph node to each compute-in-memory array and put the weight data for that graph node into that memory array. When the input data for that graph node is ready, it flows to the correct location, adjacent to the memory array, and then is executed upon by the local compute and memory.

Their third innovation is quite interesting. Instead of using a digital circuit design, it tunes the value of resistors according to weights and uses the input value to control the voltage. The output current will determine the computation result. Here are the details from Mythic.

Our analog compute takes compute-in-memory to an extreme, where we compute directly inside the memory array itself. This is possible by using the memory elements as tunable resistors, supplying the inputs as voltages and collecting the outputs as currents. We use analog computing for our core neural network matrix operations, where we are multiplying an input vector by a weight matrix.

First, it is amazingly efficient; it eliminates memory movement for the neural network weights since they are used in place as resistors. Second, it is high performance; there are hundreds of thousands of multiply-accumulate operations occurring in parallel when we perform one of these vector operations.
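The physics behind this can be written as ordinary arithmetic: by Ohm's law each cell contributes a current I = V × G, and the currents on a shared output wire add up, which is exactly a multiply-accumulate. The sketch below is an idealized model with made-up numbers; it ignores noise, signed weights, and analog-to-digital conversion.

```python
def crossbar_currents(voltages, conductances):
    """Output current per column of a resistor crossbar.

    Ohm's law gives I = V * G for each cell; currents on a shared
    column wire add up, which is a multiply-accumulate.
    """
    n_cols = len(conductances[0])
    return [sum(v * row[j] for v, row in zip(voltages, conductances))
            for j in range(n_cols)]

inputs_v = [1.0, 0.5]                 # input vector as voltages
weights_g = [[2.0, 0.0],              # weight matrix as conductances
             [4.0, 1.0]]
print(crossbar_currents(inputs_v, weights_g))
```

Every cell computes its product at the same time, so the whole vector-matrix product completes in one step rather than one multiplication at a time.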

Hailo

Hailo also applies a pipeline architecture using distributed memory. But it focuses on edge devices for inference.

By moving the memory closer to the cores, it achieves very low power consumption for edge devices. This is a nice animation of how a DNN is mapped into a resource graph that is eventually mapped onto the processor cores.

More …

This is Part 1 of the “AI Chips Technology Landscape”. There is plenty more to discuss. In part 2, we want to discuss SambaNova, founded in 2017. It is a mysterious AI chip company with about half a billion in VC investment. Its approach could be quite revolutionary, except that no outsiders know exactly what it is. But that does not mean we cannot put on our investigation hats. In fact, we will look deeply into the co-founders’ research at Stanford.